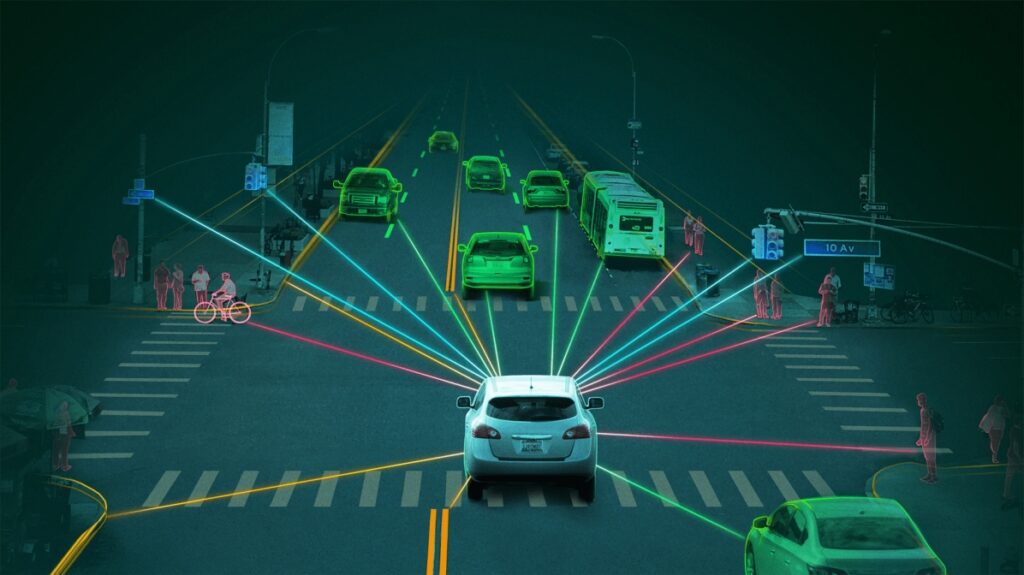

Autonomous vehicles (AVs) are transforming the future of transportation by leveraging artificial intelligence (AI) to make real-time driving decisions. However, as AI takes control, ethical questions emerge about responsibility, bias, and decision-making in critical situations. Should an AV prioritize passenger safety over pedestrians? Who is liable in case of accidents? How do AI biases impact road safety? Addressing these ethical concerns is essential for widespread adoption and trust in self-driving technology.

Key Ethical Considerations in Autonomous Vehicle AI

1. The Trolley Problem: Decision-Making in Life-Threatening Scenarios

- One of the most debated ethical dilemmas in AVs is the trolley problem, where an AV must choose between two harmful outcomes, such as:

- Swerving to avoid a pedestrian but risking passenger injury.

- Protecting passengers at the expense of a pedestrian’s life.

- Ethical frameworks for AVs must weigh the value of each life involved, but opinions on how to do so vary across cultures and legal systems.

- Example: Large-scale surveys such as the Moral Machine experiment have found cultural differences in these preferences, with some societies showing a stronger preference for protecting the young over the elderly.

2. Liability and Accountability in Accidents

- When an AV causes an accident, who is responsible: the car manufacturer, the software developer, or the vehicle owner?

- Traditional legal systems hold human drivers accountable, but when AI is driving, responsibility may shift toward the companies that build and program the vehicle.

- Possible solutions:

- Product liability laws making manufacturers responsible.

- Insurance-based models where AI-driven cars have dedicated liability coverage.

- Government oversight to enforce safety standards and compensation mechanisms.

3. Bias in AI Decision-Making

- AI learns from real-world driving data, but biased datasets can lead to discriminatory driving behavior.

- AVs may unintentionally prioritize certain groups over others based on race, gender, or socioeconomic factors.

- Example: Some facial recognition systems have shown lower accuracy for darker skin tones, which raises the concern that pedestrian detection models trained on unrepresentative data could show similar disparities.

- Solution: AI developers must train models on diverse datasets and run regular bias audits to check that performance is consistent across groups.
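
To make the idea of a bias audit concrete, here is a minimal sketch in Python. It assumes a labelled evaluation set in which each pedestrian has a group label and a flag for whether the system detected them; the function names, group labels, and sample data are all hypothetical and only illustrate the kind of check an audit might run.

```python
from collections import defaultdict


def detection_rate_by_group(records):
    """Return {group: fraction of pedestrians detected} for (group, detected) pairs."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        if detected:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def audit_gap(rates):
    """Largest difference in detection rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical evaluation results: (group label, was the pedestrian detected?)
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]

rates = detection_rate_by_group(sample)
gap = audit_gap(rates)
print(rates, gap)
```

A real audit would likely use far larger samples and a tolerance agreed in advance, but the principle is the same: measure performance per group and flag any gap that exceeds the tolerance.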

4. Privacy and Data Security in Autonomous Vehicles

- AVs collect massive amounts of personal data, including:

- Passenger locations and routes.

- Driving patterns and biometric data (if integrated with facial recognition).

- Privacy risks include data breaches, government surveillance, and misuse of driving habits for advertising.

- Ethical AV development requires:

- Strict data encryption and cybersecurity protocols.

- User consent for data collection and sharing.

- Legislation to prevent misuse of location tracking and personal information.
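
As one illustration of consent-based collection, the sketch below records a trip location only when the user has opted in, and even then stores a coarsened coordinate rather than the exact position. The `consent` dictionary, the `collect_trip_point` function, and the rounding precision are assumptions made for this example, not a description of any real AV platform.

```python
def collect_trip_point(lat, lon, consent, precision=2):
    """Return a coarsened (lat, lon) pair, or None if location consent is missing.

    Rounding to 2 decimal places keeps roughly kilometre-level detail,
    which is an illustrative choice rather than a recommended standard.
    """
    if not consent.get("location", False):
        return None
    return (round(lat, precision), round(lon, precision))


# Hypothetical usage: one user has opted in, another has not.
opted_in = collect_trip_point(37.774929, -122.419416, {"location": True})
opted_out = collect_trip_point(37.774929, -122.419416, {"location": False})
print(opted_in, opted_out)
```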

5. Ethical Considerations in AV Programming and Regulation

- Governments and car manufacturers must decide who programs ethical decision-making rules for AVs.

- AVs could follow:

- Utilitarian ethics (minimizing total harm).

- Passenger-priority ethics (protecting those inside the vehicle first).

- Legally defined rules (enforcing road laws at all costs).

- Regulation challenges:

- Lack of international consensus on ethical programming standards.

- Difficulty in coding morality into AI, as human ethics are complex and situational.
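
The three approaches above can lead to different choices in the same situation. The following Python sketch shows this with a toy model: each candidate maneuver has an estimated harm to passengers, an estimated harm to people outside the vehicle, and a legality flag. All names and numbers are hypothetical, and real systems would not reduce such decisions to two scores; the point is only that the choice of framework changes the outcome.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    name: str
    passenger_harm: float  # hypothetical estimated harm to occupants, 0..1
    external_harm: float   # hypothetical estimated harm to people outside, 0..1
    is_legal: bool         # whether the maneuver complies with road law


def utilitarian(options):
    """Pick the maneuver with the lowest total estimated harm."""
    return min(options, key=lambda m: m.passenger_harm + m.external_harm)


def passenger_priority(options):
    """Minimize passenger harm first, then harm to others as a tie-breaker."""
    return min(options, key=lambda m: (m.passenger_harm, m.external_harm))


def legal_rules(options):
    """Consider only legal maneuvers; fall back to all options if none is legal
    (the fallback is an assumption of this sketch)."""
    legal = [m for m in options if m.is_legal]
    return utilitarian(legal or options)


options = [
    Maneuver("brake_in_lane", passenger_harm=0.2, external_harm=0.6, is_legal=True),
    Maneuver("swerve_to_shoulder", passenger_harm=0.5, external_harm=0.1, is_legal=False),
    Maneuver("swerve_across_line", passenger_harm=0.1, external_harm=0.9, is_legal=False),
]

for policy in (utilitarian, passenger_priority, legal_rules):
    print(policy.__name__, "->", policy(options).name)
```

With these made-up numbers, each policy selects a different maneuver, which is why the question of who sets the rules matters so much.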

Potential Solutions and Ethical Frameworks for AI in Autonomous Vehicles

1. Global Ethical Standards for AV Decision-Making

- Governments and AI researchers must establish universal ethical guidelines to standardize AV decision-making.

- Collaborative efforts between tech companies, policymakers, and ethicists can help create transparent AI policies.

2. Human-in-the-Loop Systems

- Fully autonomous AI raises ethical concerns, so incorporating human oversight can improve decision-making.

- Example: Semi-autonomous systems where AI consults a remote human operator in high-risk scenarios.
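
A human-in-the-loop handoff could be sketched roughly as follows. The example assumes the vehicle produces a risk estimate and a confidence score for its planned action, and escalates to a remote operator when risk is high or confidence is low. The `Assessment` class, the `decide` function, and both thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    risk: float        # hypothetical estimated scenario risk, 0..1
    confidence: float  # hypothetical confidence in the planned action, 0..1


def decide(assessment, risk_threshold=0.7, confidence_floor=0.8):
    """Escalate to a human when the scenario is high-risk or the AI is unsure."""
    if assessment.risk >= risk_threshold or assessment.confidence < confidence_floor:
        return "escalate_to_operator"
    return "proceed_autonomously"


print(decide(Assessment(risk=0.9, confidence=0.95)))
print(decide(Assessment(risk=0.2, confidence=0.95)))
```

In practice the vehicle would also need a safe default, such as slowing down or stopping, while it waits for the operator to respond.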

3. Explainable AI (XAI) for AVs

- AVs should provide clear explanations of how AI makes decisions.

- Transparency in AI algorithms can improve public trust and legal accountability.
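
One simple form of explanation is a record of which inputs contributed most to a decision. The sketch below assumes the driving system can attribute a signed weight to each factor behind an action; the `DecisionRecord` structure, the factor names, and the weights are hypothetical, and producing trustworthy attributions for real models is a much harder problem than this suggests.

```python
from dataclasses import dataclass


@dataclass
class DecisionRecord:
    action: str
    factors: dict  # factor name -> signed contribution weight (hypothetical)


def explain(record, top_n=2):
    """Summarize the factors with the largest absolute contribution."""
    ranked = sorted(record.factors.items(), key=lambda kv: abs(kv[1]), reverse=True)
    reasons = ", ".join(f"{name} ({weight:+.2f})" for name, weight in ranked[:top_n])
    return f"Chose '{record.action}' mainly because of: {reasons}"


record = DecisionRecord(
    action="brake",
    factors={"pedestrian_ahead": 0.8, "wet_road": 0.3, "speed_limit": -0.1},
)
summary = explain(record)
print(summary)
```

Storing records like this alongside sensor logs would give investigators and regulators something concrete to review after an incident.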

4. Ethical AI Audits and Testing

- Governments can mandate ethics-focused AI testing before AV deployment.

- Companies should conduct bias audits and real-world scenario tests to ensure fairness in AI decisions.

5. Strong Legal Frameworks for AV Regulation

- Governments need to define clear liability laws to determine who is accountable in accidents.

- Governments should enact data protection laws, and companies should implement the safeguards needed to comply with them and secure passenger privacy.

The rise of AI-driven autonomous vehicles presents profound ethical challenges that require collaboration between governments, AI researchers, automakers, and ethicists. Addressing issues such as bias, liability, privacy, and decision-making frameworks is crucial to ensuring AVs operate safely and fairly in society.

From Our Editorial Team

Our editorial team comprises over 15 highly motivated individuals who work tirelessly to bring our subscribers the most sought-after curated content.